These days many of us (if not most) are concerned with the role AI is going to play in our lives. The concerns have mostly been about keeping our jobs and lately, especially now that AI may be blamed for military tragedies, about the role of AI in conflicts, wars and “not wars”.

Humanity has actually spent the last century trying to predict exactly this. Pessimistic scenarios have been covered really well in pop culture. Movies such as Terminator or Robocop no longer seem as unreal as they did back in the eighties. They exploit the fear of robots turning on their creators, what Asimov called the “Frankenstein complex.”

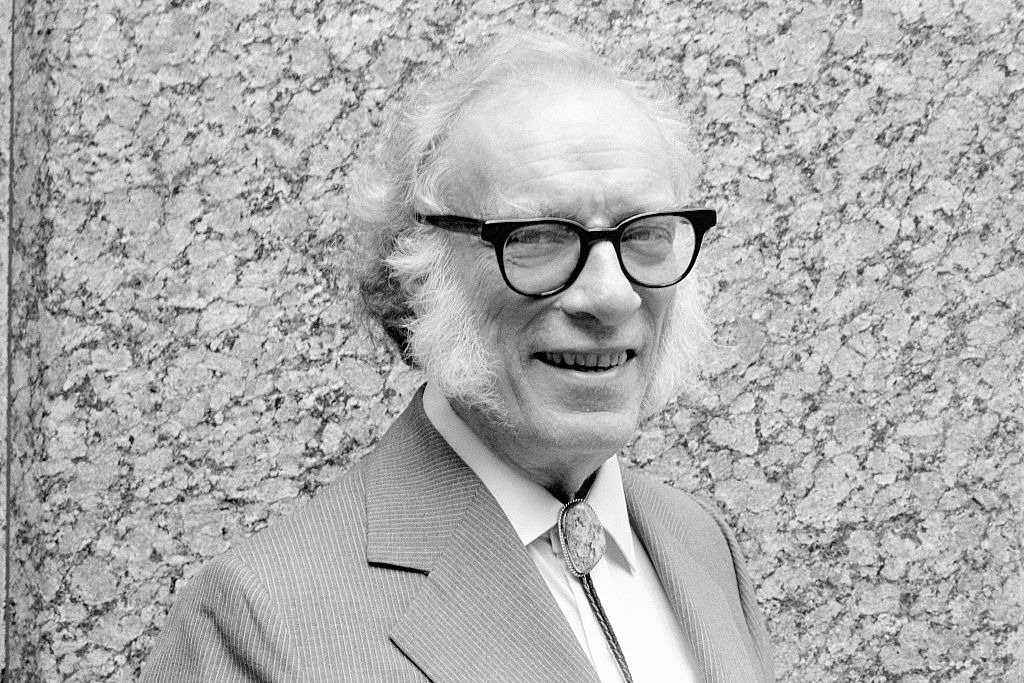

Optimistic scenarios, and the complex aspects of the social adaptation required when robots take over traditionally human roles, have been explored best in Asimov’s stories, which I enjoyed reading back in my school days.

In his Robot Series he (naively?) assumed that robots would have the Three Laws hard-coded (compare to RAG, or system prompts). “A robot may not injure a human being or, through inaction, allow a human being to come to harm” is the first and most important one. I would say it was the first attempt at what we now call “AI Alignment.” However, even in this optimistic scenario, robots found ways to work around this obstacle.

He also assumed that the world and its economy would be run by super-computers (the Machines), which would make sure that humans keep their jobs for the sake of stability. However, the Machines would move humans to positions where the human tendency to make errors does less harm.

In his “The Machine That Won the War” the super-computer is publicly credited with winning a major interstellar war, while in reality the decisive calls came down to a human leader tossing a coin. I suspect that this one may be the closest to the reality ahead of us. It’s a good time to (re-)read Asimov’s classics.
